What Is a Warmup Cache Request? Meaning and Use Cases

Imagine clicking on a website and it loads instantly. No spinning wheel. No awkward waiting. Just speed. That smooth experience often happens because of something called a warmup cache request. It sounds technical, but the idea is simple. It is all about preparing data before someone actually asks for it.

TLDR: A warmup cache request is a request sent in advance to load data into a cache before real users need it. It helps websites and apps load faster and perform better. This technique reduces server strain and improves user experience. It is commonly used in web apps, APIs, eCommerce sites, and high-traffic systems.

Contents

- 1 First, What Is a Cache?

- 2 So, What Is a Warmup Cache Request?

- 3 What Is a Cold Cache?

- 4 Why Are Warmup Cache Requests Important?

- 5 How Does a Warmup Cache Request Work?

- 6 Common Use Cases

- 7 Different Types of Caches That Benefit From Warmup

- 8 Tools Used for Cache Warmup

- 9 When Should You Use Warmup Cache Requests?

- 10 Possible Downsides

- 11 Best Practices

- 12 Real World Example

- 13 Warmup vs Preloading vs Prefetching

- 14 Final Thoughts

First, What Is a Cache?

Before we talk about a warmup cache request, we need to understand cache.

A cache is a temporary storage area. It saves copies of data. That way, the next time someone asks for the same data, it can be delivered much faster.

Think of it like this:

- The main database is a huge warehouse.

- The cache is a small shelf near the counter.

- If something is on the shelf, you get it fast.

- If not, someone has to go to the warehouse.

Going to the warehouse takes time. The shelf saves time.

That is caching in simple terms.
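
Here is a minimal sketch of the shelf-and-warehouse idea in Python. The names (`WAREHOUSE`, `shelf`, `fetch_from_warehouse`) and the product data are made up for illustration, and the 50 ms delay only pretends to be a slow database.

```python
import time

# Hypothetical stand-in for the "warehouse": a slow primary data store.
WAREHOUSE = {"sku-1": "Blue T-shirt", "sku-2": "Red Mug"}

# The "shelf": a small in-memory cache in front of it.
shelf = {}


def fetch_from_warehouse(key):
    """Simulate a slow lookup in the main database."""
    time.sleep(0.05)  # pretend this is network plus query time
    return WAREHOUSE[key]


def get(key):
    """Return (value, source): check the shelf first, then the warehouse."""
    if key in shelf:
        return shelf[key], "shelf"
    value = fetch_from_warehouse(key)
    shelf[key] = value  # keep a copy for next time
    return value, "warehouse"


print(get("sku-1"))  # first lookup goes to the warehouse
print(get("sku-1"))  # second lookup is served from the shelf
```

Real caches also expire old entries and limit their size. This sketch leaves both out to keep the idea visible.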

So, What Is a Warmup Cache Request?

A warmup cache request is a request made before real users visit a page or use a feature. Its job is to load important data into the cache.

It “warms up” the cache.

When actual users arrive, the data is already waiting.

No cold start. No delay.

Think of it like warming up a car engine in winter. You start it early. So when you are ready to drive, everything runs smoothly.

What Is a Cold Cache?

A cache is cold when the data a request needs has not been stored in it yet.

The first request has to:

- Travel to the database

- Collect data

- Process it

- Store it in cache

- Return it to the user

This takes longer.

After that first request, the cache becomes warm. Future requests are faster.

A warmup cache request simply handles this first slow step in advance.
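
The difference is easy to see in a small simulation. This is only a sketch: `load_from_database` is a hypothetical function that sleeps for 20 ms in place of a real query, and the key names are invented.

```python
import time

cache = {}
db_calls = 0


def load_from_database(key):
    """Hypothetical slow data source; counts how often it is used."""
    global db_calls
    db_calls += 1
    time.sleep(0.02)
    return f"value-for-{key}"


def get(key):
    """Serve from cache, loading from the database on a miss."""
    if key not in cache:
        cache[key] = load_from_database(key)
    return cache[key]


def warm(keys):
    """Warmup: trigger the slow first load before any user asks."""
    for key in keys:
        get(key)


def timed_get(key):
    """Return how long a single get() took, in seconds."""
    start = time.perf_counter()
    get(key)
    return time.perf_counter() - start


cold = timed_get("homepage")   # a user pays the database cost
warm(["pricing"])              # warmup pays the cost ahead of time
warmed = timed_get("pricing")  # the first user request is already a hit

print(f"cold first request:   {cold * 1000:.2f} ms")
print(f"warmed first request: {warmed * 1000:.2f} ms")
```

The database is queried the same number of times either way. Warmup only moves that work to a moment when no user is waiting.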

Why Are Warmup Cache Requests Important?

Speed matters. A lot.

Here is why warmup caching is useful:

- Improves user experience

- Reduces server load

- Helps keep traffic spikes from overwhelming the database

- Helps during deployments

If 10,000 people visit a website at the same time and the cache is cold, the database may struggle.

If the cache is already warm, the system stays stable.

That is a big difference.
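
A rough simulation shows why. The sketch below assumes a plain cache with no request coalescing or locking, and it uses a barrier to force every visitor to check the cache at the same instant. Real traffic is messier, so treat the numbers as a worst case.

```python
import threading


def simulate_spike(visitors, prewarmed):
    """Count database hits when `visitors` request the same page at once."""
    cache = {"homepage": "<html>...</html>"} if prewarmed else {}
    db_hits = 0
    lock = threading.Lock()
    # The barrier makes every visitor check the cache before anyone fills it,
    # which models requests that arrive at the same moment.
    all_checked = threading.Barrier(visitors)

    def visit():
        nonlocal db_hits
        missed = "homepage" not in cache
        all_checked.wait()
        if missed:
            with lock:
                db_hits += 1  # each miss becomes a database query
                cache["homepage"] = "<html>...</html>"

    threads = [threading.Thread(target=visit) for _ in range(visitors)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return db_hits


print("cold cache:", simulate_spike(100, prewarmed=False), "database hits")
print("warm cache:", simulate_spike(100, prewarmed=True), "database hits")
```

With a cold cache every simultaneous visitor misses and queries the database. With a warm cache none of them do.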

How Does a Warmup Cache Request Work?

Let us break it down into simple steps.

1. A script or automated tool sends a request to specific URLs or API endpoints.

2. The system processes the request.

3. The data gets stored in cache.

4. Real users arrive.

5. They get the cached version right away.

This can happen:

- After a server restart

- After deploying new code

- At scheduled times

- Before a major event or promotion

It is proactive, not reactive.
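
The steps above can be sketched as a short Python script that uses only the standard library. The URLs are placeholders, and `http_get` and `warm_cache` are names chosen for this example. Whether a request actually fills a cache depends on how your server or CDN is configured.

```python
import urllib.error
import urllib.request


def http_get(url, timeout=10):
    """Default fetcher: request a URL and return its HTTP status code."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # a 4xx/5xx still tells us the server answered


def warm_cache(urls, fetch=http_get):
    """Request each URL once so the server can fill its cache.

    Returns a dict of URL -> status code, or URL -> error text on failure.
    """
    results = {}
    for url in urls:
        try:
            results[url] = fetch(url)
        except OSError as err:  # URLError and timeouts are OSError subclasses
            results[url] = f"failed: {err}"
    return results


# Hypothetical URLs. The stub fetcher keeps this demo off the network;
# drop the `fetch` argument to send real HTTP requests.
pages = ["https://example.com/", "https://example.com/category/shoes"]
for url, outcome in warm_cache(pages, fetch=lambda url: 200).items():
    print(url, "->", outcome)
```

Passing the fetcher in as a parameter makes the script easy to test and lets one failed URL be reported without stopping the rest.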

Common Use Cases

1. eCommerce Product Pages

Imagine an online store launching a big sale.

Thousands of users will hit:

- Homepage

- Category pages

- Top product pages

If those pages are warmed up in advance, the site stays fast during traffic spikes.

2. News Websites

Breaking news spreads quickly.

A popular article may suddenly receive massive traffic.

Media sites often warm up:

- Homepage

- Trending stories

- Featured articles

This ensures readers get instant access.

3. API Services

APIs power apps and integrations.

Some endpoints are used more than others.

Developers can send warmup requests to:

- Load frequent queries

- Cache authentication tokens

- Prepare configuration data

This keeps API response times low.

4. After Deployment

When new code is deployed, caches are often cleared.

This leads to a cold start problem.

Instead of letting real users suffer slower speeds, teams run automated warmup scripts.

Users never notice the change.
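
One way such a script might look is sketched below. It assumes the freshly deployed server may need a few seconds before it answers, so each URL is retried. The function names, retry counts, and paths are illustrative, and `fetch` is any callable that returns an HTTP status code.

```python
import time


def warm_with_retries(url, fetch, attempts=5, delay=2.0, sleep=time.sleep):
    """Call fetch(url) until it returns a 2xx status or attempts run out."""
    for attempt in range(1, attempts + 1):
        try:
            status = fetch(url)
            if 200 <= status < 300:
                return True
        except OSError:
            pass  # the server may still be starting; try again
        if attempt < attempts:
            sleep(delay)
    return False


def post_deploy_warmup(urls, fetch, **retry_options):
    """Warm every URL and return a process exit code (0 means all warmed)."""
    failed = [u for u in urls if not warm_with_retries(u, fetch, **retry_options)]
    for url in failed:
        print("warmup failed:", url)
    return 1 if failed else 0


# Demo with a stub that fails once, as a freshly restarted server might.
responses = iter([503, 200, 200])
exit_code = post_deploy_warmup(
    ["/", "/pricing"], fetch=lambda url: next(responses), delay=0
)
print("exit code:", exit_code)
```

Returning an exit code lets a CI/CD job flag a failed warmup instead of silently sending users to a cold cache.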

5. Serverless and Cloud Systems

Serverless functions are often shut down after sitting idle.

The next request has to start a fresh instance first. This creates latency, usually called a cold start.

Periodic warmup requests reduce that delay.

They keep functions responsive.
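
A common pattern is to let the function recognize a warmup ping and return early. The sketch below is generic: the `warmup` key in the event is a made-up marker, and the event shape and scheduling mechanism differ between platforms.

```python
def handler(event, context=None):
    """Hypothetical serverless entry point.

    A scheduled ping (marked here with an invented `warmup` key) keeps an
    instance alive without running the real business logic.
    """
    if event.get("warmup"):
        return {"statusCode": 200, "body": "warm"}

    # Normal request path.
    name = event.get("name", "world")
    return {"statusCode": 200, "body": f"hello, {name}"}


print(handler({"warmup": True}))
print(handler({"name": "Ada"}))
```

The early return keeps warmup pings cheap, since they skip database calls and other real work.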

Different Types of Caches That Benefit From Warmup

Warmup techniques can apply to various caching layers:

- Browser cache – stores static assets like images and CSS

- CDN cache – distributes content globally

- Application cache – stores computed results

- Database cache – keeps frequent queries ready

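Each layer can be warmed independently. The browser cache is the exception: it lives on the user's device, so it is filled through preloading or prefetching rather than server-side requests.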

Large systems often use multiple layers together.

Tools Used for Cache Warmup

There are various tools and approaches depending on the stack.

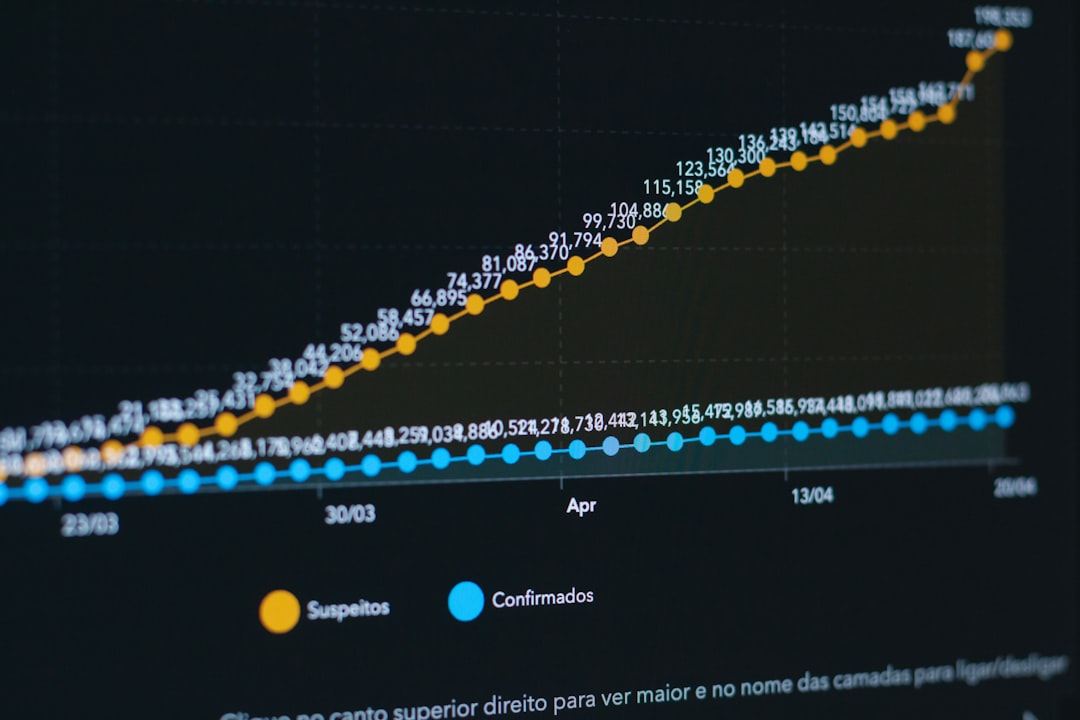

Below is a simple comparison chart.

| Tool / Method | Best For | Automation Level | Complexity |
|---|---|---|---|
| Custom Scripts (Python, Node) | Targeted URL warming | High | Medium |
| cURL Requests | Simple manual warmups | Low | Low |
| CI/CD Pipeline Jobs | Post-deployment warmup | High | Medium |
| Load Testing Tools | Large-scale warming | High | High |
| CDN Prefetch Features | Static and global content | High | Low |

Some teams keep it simple. Others build fully automated systems.

When Should You Use Warmup Cache Requests?

Not every system needs it.

You should consider it if:

- You expect traffic spikes

- You deploy updates frequently

- Performance is critical

- Your database gets overloaded easily

Small blogs may not need proactive warmups.

Large SaaS platforms often do.

Possible Downsides

Warmup cache requests are helpful. But they are not perfect.

Here are a few things to consider:

- Extra server load during warmup

- Wasted resources if data is never used

- Complexity in automation

If you warm everything all the time, you might waste computing power.

Smart teams warm only what matters.
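
One simple way to decide what matters is to count which paths users actually request. The sketch below assumes you can already extract request paths from your access logs. The sample data, the `top_paths` name, and the thresholds are invented.

```python
from collections import Counter


def top_paths(request_paths, limit=3, min_hits=2):
    """Return the most requested paths, skipping ones seen fewer than min_hits times."""
    counts = Counter(request_paths)
    return [path for path, hits in counts.most_common(limit) if hits >= min_hits]


# Hypothetical sample of paths pulled from an access log.
sample = ["/", "/sale", "/", "/about", "/sale", "/", "/product/42", "/product/42"]
print(top_paths(sample))
```

Here `/about` was requested only once, so it stays off the warmup list.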

Best Practices

Want to do it right? Follow these simple tips:

- Identify high-traffic endpoints

- Automate warmups after deployments

- Schedule warmups during low-traffic periods

- Monitor performance before and after

- Avoid warming rarely used pages

Measure results. Always.

Data beats guesswork.
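
A small helper like the one below can serve as a starting point for before-and-after measurements. It is a sketch only: `request` is any zero-argument callable you supply, and the two lambdas are stand-ins for a slow (cold) and a fast (warm) request.

```python
import statistics
import time


def measure_latency(request, samples=20):
    """Call request() repeatedly and return (median, worst) latency in seconds."""
    timings = []
    for _ in range(samples):
        start = time.perf_counter()
        request()
        timings.append(time.perf_counter() - start)
    return statistics.median(timings), max(timings)


# Stand-ins for real requests: one slow (cold) path and one fast (warm) path.
cold_median, cold_worst = measure_latency(lambda: time.sleep(0.01), samples=5)
warm_median, warm_worst = measure_latency(lambda: None, samples=5)
print(f"cold: median {cold_median * 1000:.2f} ms, worst {cold_worst * 1000:.2f} ms")
print(f"warm: median {warm_median * 1000:.2f} ms, worst {warm_worst * 1000:.2f} ms")
```

The worst-case value matters here, because a cold cache mostly hurts the first few requests, and a median can hide them.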

Real World Example

Let us say you run an online ticketing platform.

A famous band announces a concert.

Tickets go on sale at 10 AM.

You expect 200,000 users within minutes.

Here is what you do at 9:55 AM:

- Warm homepage cache

- Warm event page cache

- Warm seating map data

- Warm pricing API endpoints

At 10 AM, users rush in.

The system stays stable.

Without warmup, the first wave might overload your database.

That could mean crashes.

Warmup prevents chaos.
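
The ticketing scenario could be sketched like this. The paths are invented, the five-minute lead matches the 9:55 AM start above, and the stub `fetch` stands in for a real HTTP call. Warmup requests run in parallel, with a cap on workers so the warmup itself stays gentle on the backend.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import datetime, timedelta


def warmup_start(sale_time, lead=timedelta(minutes=5)):
    """Return when warmup should begin for a given on-sale time."""
    return sale_time - lead


def warm_concurrently(targets, fetch, max_workers=4):
    """Warm all targets in parallel and return a dict of target -> result."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        statuses = list(pool.map(fetch, targets))
    return dict(zip(targets, statuses))


# Hypothetical paths for the ticketing example.
TARGETS = ["/", "/events/big-concert", "/api/seating-map", "/api/pricing"]

sale = datetime(2030, 1, 1, 10, 0)
print("start warmup at", warmup_start(sale).strftime("%H:%M"))
print(warm_concurrently(TARGETS, fetch=lambda path: 200))  # stub, no network
```

If cached entries expire quickly, the lead time needs to be shorter than the cache lifetime, or the warmed data will be gone before the sale opens.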

Warmup vs Preloading vs Prefetching

These terms sound similar. But they are slightly different.

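- Warmup – Loads data into a server-side cache before real users request it

- Preloading – Fetches resources the current page will need, earlier than the browser would otherwise discover them

- Prefetching – Fetches resources likely to be needed for a later page or action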

Warmup is usually server-side.

Preloading and prefetching are often client-side.

All aim to improve speed.
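
On the client side, preloading and prefetching are usually requested through hints such as the HTTP `Link` header with `rel=preload` or `rel=prefetch`. The helper below only builds the header text. The function name and the paths are made up, and how the header gets attached depends on your web framework.

```python
def link_header(path, rel, as_type=None):
    """Build one HTTP Link header value for a resource hint."""
    value = f"<{path}>; rel={rel}"
    if as_type:
        value += f"; as={as_type}"
    return value


# Preload a stylesheet the current page needs.
print("Link:", link_header("/static/app.css", "preload", "style"))
# Prefetch a page the user is likely to open next.
print("Link:", link_header("/checkout", "prefetch"))
```

Unlike a server-side warmup, these hints only help the one browser that receives them.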

Final Thoughts

A warmup cache request is simple in concept. But powerful in impact.

It prepares your system for real users.

It reduces waiting time.

It protects databases from overload.

It improves user satisfaction.

In a world where users expect instant results, preparation matters.

You could wait for the first user to warm your cache.

Or you could be ready.

The fastest systems choose to be ready.