Edge AI Deployment Tools That Help You Run AI Models On Edge Hardware

Artificial intelligence is no longer confined to massive cloud data centers. From smart cameras and autonomous drones to medical wearables and industrial sensors, intelligent systems are increasingly running directly on edge hardware. This shift toward Edge AI is transforming how devices process data—bringing computation closer to the source, reducing latency, and enhancing privacy. However, deploying AI models on resource-constrained devices introduces unique challenges that demand specialized tools and frameworks.

TLDR: Edge AI deployment tools help developers optimize, convert, and run machine learning models efficiently on local devices like IoT sensors, smartphones, and embedded systems. These tools handle model compression, hardware acceleration, compatibility, and runtime management. Popular solutions include TensorFlow Lite, ONNX Runtime, NVIDIA TensorRT, OpenVINO, and AWS IoT Greengrass. Choosing the right deployment tool depends on hardware constraints, performance needs, and ecosystem integration.

Contents

- 1 Why Edge AI Requires Specialized Deployment Tools

- 2 Key Features of Edge AI Deployment Tools

- 3 Popular Edge AI Deployment Tools

- 4 Special Considerations for Microcontrollers

- 5 Model Lifecycle Management on the Edge

- 6 Security and Privacy Benefits

- 7 Challenges in Edge AI Deployment

- 8 How to Choose the Right Tool

- 9 The Future of Edge AI Deployment Tools

Why Edge AI Requires Specialized Deployment Tools

Unlike cloud servers, which can scale compute and memory on demand, edge devices operate under strict constraints:

- Limited memory and storage

- Reduced computing power

- Energy efficiency requirements

- Real-time processing demands

As a result, deploying AI models to edge hardware is not as simple as exporting a trained neural network. Models must be optimized, compressed, converted, and sometimes restructured. Edge AI deployment tools automate and streamline this process.

These platforms help bridge the gap between model development and real-world deployment by providing:

- Model quantization and compression

- Hardware-specific acceleration

- Cross-platform compatibility

- Efficient runtime execution

- Security integration

Key Features of Edge AI Deployment Tools

1. Model Optimization and Quantization

One of the most important deployment capabilities is model optimization. Large neural networks trained in the cloud often need to be reduced in size before running on edge hardware.

Common optimization methods include:

- Quantization: Converting 32-bit floating-point weights into lower-precision formats, such as 16-bit floats or 8-bit integers, to reduce memory and computation requirements.

- Pruning: Removing redundant model parameters.

- Weight sharing: Compressing models by grouping similar weights so that each group is stored as a single shared value.

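Combined, these techniques can shrink a model substantially while keeping accuracy at an acceptable level. For example, storing weights as 8-bit integers instead of 32-bit floats cuts weight storage roughly fourfold.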
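To make the quantization step concrete, here is a minimal sketch of affine (asymmetric) 8-bit quantization in plain Python. The function names and sample weights are illustrative assumptions; real toolchains typically work per tensor or per channel and use calibration data, so treat this as a picture of the idea rather than any particular tool's implementation.

```python
def quantize_int8(weights):
    """Affine (asymmetric) quantization of floats to signed 8-bit integers."""
    lo, hi = min(weights), max(weights)
    # Make sure the representable range includes 0.0 so zero maps exactly.
    lo, hi = min(lo, 0.0), max(hi, 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # avoid a zero scale for all-zero input
    zero_point = round(-128 - lo / scale)
    q = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return q, scale, zero_point


def dequantize(q, scale, zero_point):
    """Map int8 values back to approximate floats."""
    return [(v - zero_point) * scale for v in q]


# Illustrative weights, not taken from a real model.
weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
q, scale, zp = quantize_int8(weights)
restored = dequantize(q, scale, zp)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, zp, max_error)
```

The round trip is lossy: each restored weight differs from the original by at most about one quantization step (`scale`), which is the accuracy cost paid for the smaller footprint.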

2. Hardware Acceleration Support

Many edge devices include specialized AI accelerators such as GPUs, NPUs, TPUs, or DSPs. Deployment tools often provide native support to leverage these accelerators efficiently.

When used well, hardware acceleration typically delivers:

- Lower latency inference

- Improved power efficiency

- Optimized execution pipelines

Without proper acceleration integration, even optimized models may fail to meet performance expectations.
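Whether a given accelerator path actually meets expectations is best checked by measurement on the target device. The sketch below is one simple way to collect latency statistics with the standard library; `fake_infer` is a hypothetical stand-in for a real model call, and the warm-up and run counts are arbitrary assumptions.

```python
import statistics
import time


def benchmark(infer, sample, warmup=5, runs=50):
    """Time repeated calls to infer(sample); return latency stats in milliseconds."""
    for _ in range(warmup):
        infer(sample)  # warm caches and lazy initialisation before timing
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        infer(sample)
        latencies.append((time.perf_counter() - start) * 1000.0)
    latencies.sort()
    return {
        "p50_ms": statistics.median(latencies),
        "p95_ms": latencies[min(len(latencies) - 1, int(0.95 * len(latencies)))],
        "max_ms": latencies[-1],
    }


def fake_infer(x):
    """Hypothetical stand-in for a real model call."""
    return sum(v * v for v in x)


stats = benchmark(fake_infer, [0.1] * 1000)
print(stats)
```

Reporting a tail percentile alongside the median matters for real-time workloads, where occasional slow inferences can be more damaging than a slightly higher average.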

3. Cross-Platform Model Conversion

AI models are typically trained in frameworks such as TensorFlow, PyTorch, or Keras. However, edge runtimes usually expect their own model format, such as a .tflite file or an ONNX graph. Deployment tools convert trained models into these formats, so the same model can run on different platforms without being rebuilt or retrained from scratch.

4. Lightweight Runtime Engines

Edge AI frameworks often include minimal runtime environments that execute models efficiently without unnecessary dependencies. A lightweight runtime is crucial for embedded environments with limited system resources.

Popular Edge AI Deployment Tools

TensorFlow Lite

TensorFlow Lite (TFLite), which Google rebranded as LiteRT in 2024, is one of the most widely adopted tools for deploying machine learning models on edge and mobile devices. It supports Android, iOS, microcontrollers, and embedded Linux systems.

Key features include:

- Post-training quantization tools

- Support for Edge TPU acceleration

- Small binary size for low-memory devices

- Microcontroller-specific variant: TensorFlow Lite for Microcontrollers

TFLite is especially valuable for developers already working within the TensorFlow ecosystem.
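As an illustration of the post-training quantization workflow, the sketch below converts a TensorFlow SavedModel with the converter's default optimizations. `tf.lite.TFLiteConverter.from_saved_model` and `tf.lite.Optimize.DEFAULT` are part of the TensorFlow Python API; the function name and both paths are assumptions made for this example, and TensorFlow must be installed for the function to run.

```python
def convert_saved_model(saved_model_dir, output_path):
    """Convert a SavedModel to a .tflite file with default post-training optimizations."""
    import tensorflow as tf  # imported lazily; requires the tensorflow package

    converter = tf.lite.TFLiteConverter.from_saved_model(saved_model_dir)
    # Without a representative dataset, DEFAULT applies dynamic-range
    # quantization: weights are stored as 8-bit integers.
    converter.optimizations = [tf.lite.Optimize.DEFAULT]
    tflite_model = converter.convert()  # returns the serialized model as bytes
    with open(output_path, "wb") as f:
        f.write(tflite_model)
    return len(tflite_model)  # size in bytes, handy for before/after comparison


# Hypothetical usage; both paths are placeholders:
# size = convert_saved_model("path/to/saved_model", "model.tflite")
```

Comparing the returned size, and the accuracy on a held-out set, against an unoptimized conversion is a quick way to see whether the trade-off is acceptable for a given model.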

ONNX Runtime

ONNX Runtime enables cross-framework deployment by using the Open Neural Network Exchange (ONNX) format. It allows models trained in PyTorch, TensorFlow, and other frameworks to run across multiple hardware backends.

Advantages:

- Broad hardware compatibility

- Optimized inference engine

- Support for CPU, GPU, and edge accelerators

ONNX Runtime stands out for its interoperability, making it a strong fit for heterogeneous environments.
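A minimal sketch of that portability in practice: the code below asks ONNX Runtime which execution providers are available and falls back to the CPU when a preferred accelerator is missing. The `onnxruntime` calls used (`get_available_providers`, `InferenceSession`, `get_inputs`, `run`) are real API; the helper names, the preference order, and the single-input assumption are illustrative choices.

```python
def select_providers(preferred, available):
    """Keep preferred providers that are available, in order; fall back to CPU."""
    chosen = [p for p in preferred if p in available]
    return chosen or ["CPUExecutionProvider"]


def run_onnx(model_path, input_array,
             preferred=("CUDAExecutionProvider", "CPUExecutionProvider")):
    """Run one inference on a single-input model using the best available provider."""
    import onnxruntime as ort  # imported lazily; requires the onnxruntime package

    providers = select_providers(preferred, ort.get_available_providers())
    session = ort.InferenceSession(model_path, providers=providers)
    input_name = session.get_inputs()[0].name  # assumes the model has one input
    return session.run(None, {input_name: input_array})


# The selection logic can be exercised without onnxruntime installed:
print(select_providers(("CUDAExecutionProvider", "CPUExecutionProvider"),
                       ["CPUExecutionProvider"]))
```

Keeping the provider choice in one small function means the same application code can ship to devices with and without an accelerator.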

NVIDIA TensorRT

For edge devices powered by NVIDIA GPUs or Jetson platforms, TensorRT provides deep optimization and high-performance inference capabilities.

It offers:

- Graph optimization and layer fusion

- Reduced-precision inference (FP16, and INT8 with calibration)

- Kernel auto-tuning for the target GPU

TensorRT is particularly well-suited for high-performance applications such as robotics, autonomous vehicles, and smart video analytics.

Intel OpenVINO

OpenVINO is optimized for Intel hardware, including CPUs, integrated GPUs, and VPUs. It simplifies model optimization and accelerates inference on supported Intel chips.

Main benefits:

- Model optimizer tool for conversion

- Pre-trained model zoo

- Cross-device execution support

OpenVINO excels in industrial automation, healthcare imaging, and retail analytics environments using Intel infrastructure.

AWS IoT Greengrass and Azure IoT Edge

Cloud providers also offer hybrid edge deployment solutions. Tools like AWS IoT Greengrass and Azure IoT Edge extend cloud AI services to local devices.

These solutions provide:

- Remote device management

- Secure model updates

- Edge-to-cloud synchronization

- Containerized deployment support

They are ideal for organizations that want centralized monitoring with distributed intelligence.

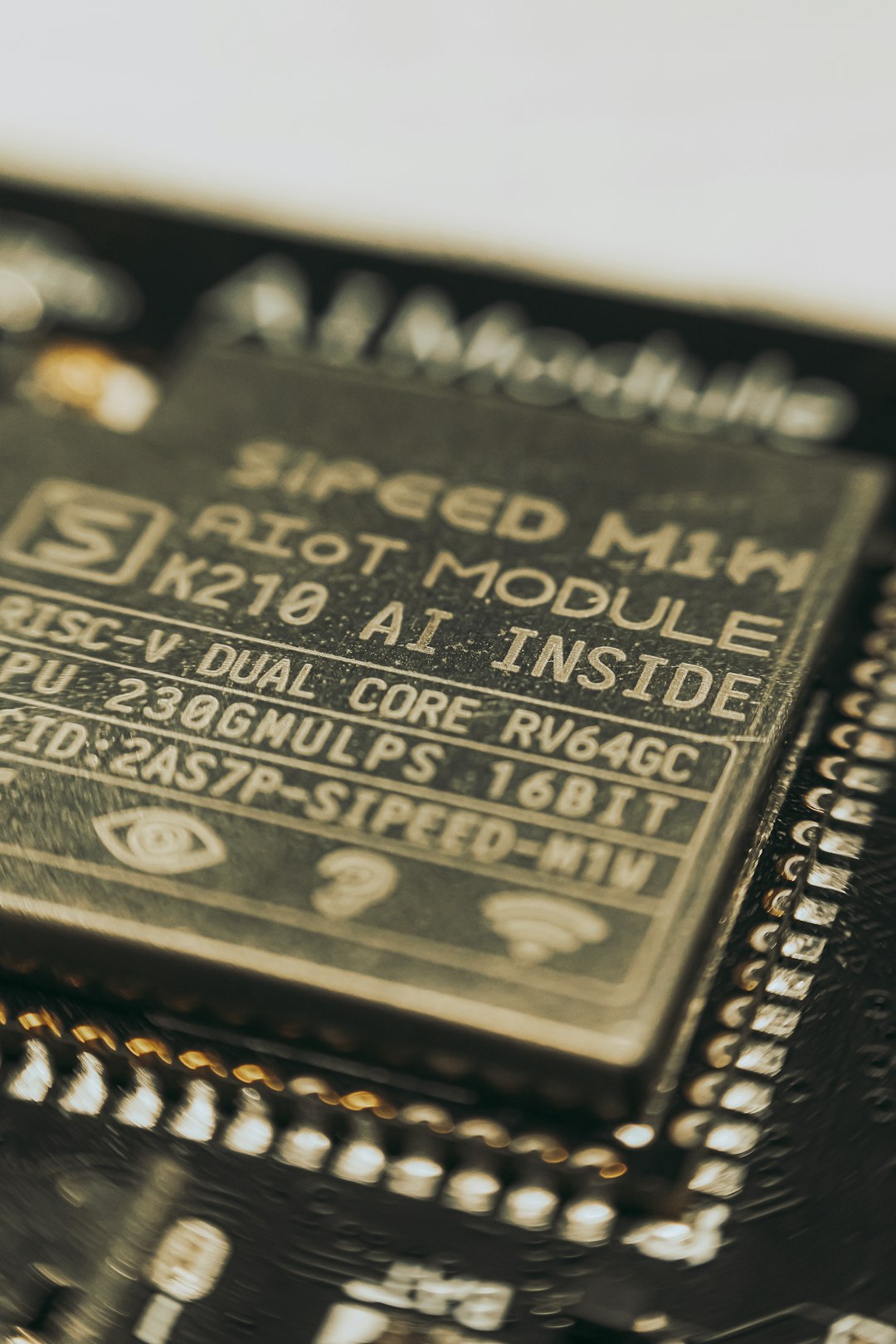

Special Considerations for Microcontrollers

Edge AI isn’t limited to powerful devices like smartphones and mini PCs. Microcontrollers (MCUs) represent an even more constrained category requiring ultra-lightweight tooling.

Deployment tools for MCUs typically focus on:

- Extremely small memory footprints (often under 256 KB RAM)

- Static allocation instead of dynamic memory

- Minimal runtime libraries

Examples include:

- TensorFlow Lite for Microcontrollers

- Edge Impulse deployment SDK

- CMSIS-NN for ARM Cortex-M processors

These tools enable applications such as gesture recognition, anomaly detection, keyword spotting, and environmental sensing directly on sensors.
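Because MCU runtimes favor static allocation, it helps to estimate memory needs before flashing anything. The sketch below is a rough back-of-the-envelope calculation under simplifying assumptions: the layer shapes describe a hypothetical keyword-spotting model, weights live in flash, and the activation buffer only has to hold one layer's input and output at a time. Real runtimes add their own overhead, so measured figures will differ.

```python
def memory_budget(layers, bytes_per_value=1):
    """Estimate flash for weights and a peak activation buffer, in bytes.

    Each layer is (name, num_params, input_values, output_values).
    """
    flash = sum(params for _, params, _, _ in layers) * bytes_per_value
    # A layer needs its input and output buffers alive at the same time.
    arena = max(inp + out for _, _, inp, out in layers) * bytes_per_value
    return flash, arena


# Hypothetical keyword-spotting model with int8 weights and activations.
layers = [
    ("conv1", 2_000, 49 * 40, 25 * 20 * 8),
    ("conv2", 18_000, 25 * 20 * 8, 13 * 10 * 16),
    ("dense", 8_324, 13 * 10 * 16, 4),
]
flash, arena = memory_budget(layers)
RAM_BUDGET = 256 * 1024  # the 256 KB figure mentioned above
print(flash, arena, arena <= RAM_BUDGET)
```

Running this kind of estimate with `bytes_per_value=4` shows quickly why float32 models are often a poor fit for MCU-class devices.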

Model Lifecycle Management on the Edge

Deployment doesn’t end with inference. Edge AI systems must also handle:

- Version control for models

- Secure updates over the air

- Performance monitoring

- Fallback systems in case of model failure

Advanced deployment frameworks integrate with DevOps pipelines, enabling continuous delivery of optimized models to thousands or millions of devices. This approach—sometimes referred to as Edge MLOps—ensures scalability and maintainability.
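The update-and-fallback part of this lifecycle can be sketched in a few lines. The class below is a hypothetical illustration, not any framework's API: it installs a new model only when its SHA-256 checksum matches, runs a caller-supplied health check, and rolls back to the previous version if the check fails.

```python
import hashlib


class ModelSlot:
    """Keeps an active model plus the previous one for rollback."""

    def __init__(self, version, blob):
        self.active = (version, blob)
        self.previous = None

    def update(self, version, blob, expected_sha256, health_check):
        """Install a new model only if its checksum matches and it passes a health check."""
        if hashlib.sha256(blob).hexdigest() != expected_sha256:
            return False  # corrupted download: keep the current model
        self.previous, self.active = self.active, (version, blob)
        if not health_check(blob):
            self.rollback()
            return False
        return True

    def rollback(self):
        """Restore the previously active model, if there is one."""
        if self.previous is not None:
            self.active, self.previous = self.previous, None


# Placeholder byte strings stand in for real model files.
slot = ModelSlot("1.0.0", b"model-v1")
new_blob = b"model-v2"
ok = slot.update("1.1.0", new_blob, hashlib.sha256(new_blob).hexdigest(),
                 health_check=lambda blob: len(blob) > 0)
print(ok, slot.active[0])
```

In a real fleet the health check would typically run a few known inputs through the new model and compare outputs or latency against thresholds before committing.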

Security and Privacy Benefits

Running AI models locally offers distinct advantages in security and privacy:

- Reduced need to transmit sensitive data to the cloud

- Lower exposure to network attacks

- Simpler compliance with data-protection rules in regulated sectors such as healthcare and finance

Many deployment platforms also support encrypted model storage and transport, and can build on device features such as secure boot and hardware-backed identity verification. These safeguards are especially important in sectors such as smart cities and medical devices.
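One small piece of this picture is checking that a model file really came from a trusted source before loading it. The sketch below uses an HMAC from the Python standard library as a simplified stand-in; the function names and key are assumptions, and production systems more commonly use asymmetric signatures with keys held in secure hardware.

```python
import hashlib
import hmac


def sign_model(blob, key):
    """Compute an HMAC-SHA256 tag over the model bytes."""
    return hmac.new(key, blob, hashlib.sha256).hexdigest()


def verify_model(blob, signature, key):
    """Return True only if the tag matches; uses a constant-time comparison."""
    return hmac.compare_digest(sign_model(blob, key), signature)


# Placeholder key and model bytes for illustration only.
key = b"device-provisioned-secret"
model = b"model-bytes"
tag = sign_model(model, key)
print(verify_model(model, tag, key), verify_model(model + b"x", tag, key))
```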

Challenges in Edge AI Deployment

Despite powerful tools, deploying AI at the edge remains complex. Developers often face:

- Hardware fragmentation across vendors

- Trade-offs between accuracy and performance

- Limited debugging capabilities

- Power consumption constraints

Choosing the right framework requires a clear understanding of hardware targets, application requirements, and long-term maintenance plans.

How to Choose the Right Tool

When evaluating edge AI deployment tools, consider the following criteria:

- Hardware compatibility: Does the tool support your target chipset or accelerator?

- Model format flexibility: Can it convert and optimize models from your training framework?

- Performance benchmarks: Does it meet latency and throughput requirements?

- Ecosystem integration: Does it integrate with your cloud or device management system?

- Community and support: Is the documentation thorough, and is the project actively maintained?

The best solution often depends on whether your project prioritizes performance, portability, energy efficiency, or scalability.
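The criteria above can be turned into a simple shortlist procedure: discard candidates that fail a hard requirement, then rank the rest by a measured metric. The sketch below does this for hardware compatibility and latency. The runtime names and numbers are placeholders invented for illustration, not benchmark results; real figures should come from measurements on your own device.

```python
def rank_tools(candidates, target_hw, max_latency_ms):
    """Filter by hard requirements, then sort the rest by measured latency."""
    viable = [
        c for c in candidates
        if target_hw in c["hardware"] and c["latency_ms"] <= max_latency_ms
    ]
    return sorted(viable, key=lambda c: c["latency_ms"])


# Hypothetical candidates with made-up latency values.
candidates = [
    {"name": "runtime_a", "hardware": {"arm_cpu", "edge_tpu"}, "latency_ms": 18.0},
    {"name": "runtime_b", "hardware": {"arm_cpu", "x86_cpu"}, "latency_ms": 25.0},
    {"name": "runtime_c", "hardware": {"nvidia_gpu"}, "latency_ms": 4.0},
]
ranked = rank_tools(candidates, target_hw="arm_cpu", max_latency_ms=30.0)
print([c["name"] for c in ranked])
```

Treating hardware support as a filter rather than a score reflects the fact that a fast runtime is of no use if it cannot run on the target chipset.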

The Future of Edge AI Deployment Tools

The evolution of specialized AI chips, including neuromorphic and low-power accelerators, is shaping the next generation of deployment platforms. We can expect tighter hardware-software co-design, automated optimization pipelines, and increasingly intelligent runtimes capable of adapting models dynamically based on device constraints.

Additionally, federated learning and on-device personalization are gaining traction. Future deployment tools will likely support local model fine-tuning without centralizing data, enabling smarter and more personalized edge intelligence.

Edge AI deployment tools are not merely optional utilities—they are essential infrastructure in a distributed computing world. As more devices become intelligent and autonomous, the ability to efficiently deploy and manage AI at the edge will define the next wave of technological innovation.